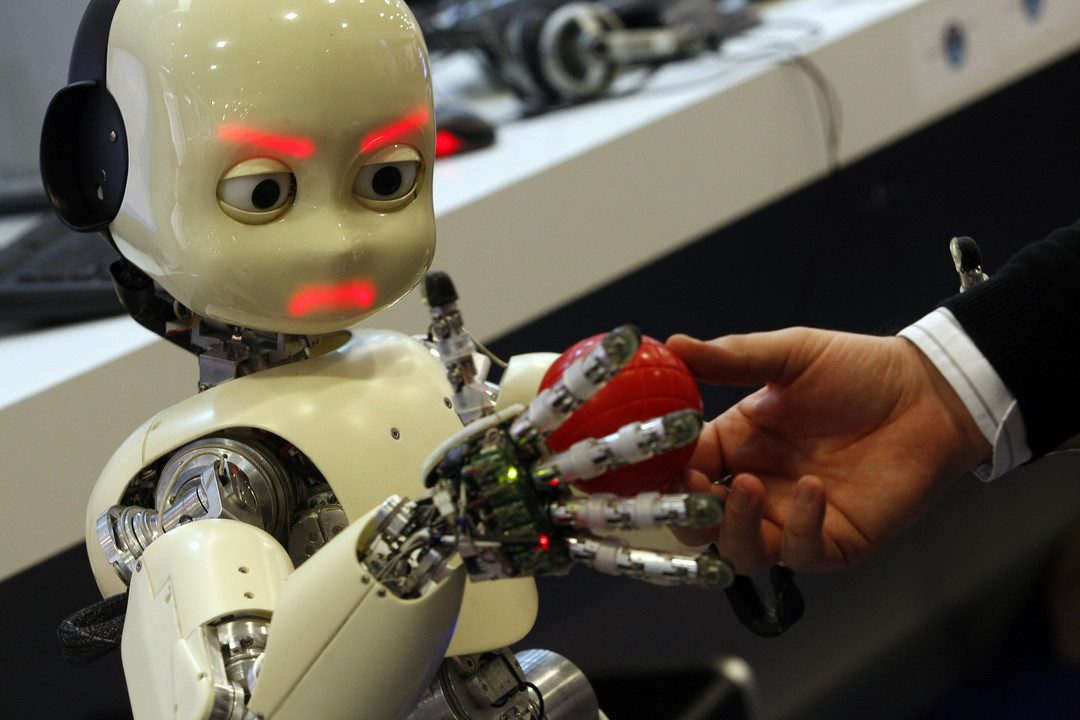

Efficient Visuo-Haptic Object Shape Completion for Robot Manipulation

L. Rustler, J. Matas and M. Hoffmann, “Efficient Visuo-Haptic Object Shape Completion for Robot Manipulation,” 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Detroit, MI, USA, 2023, pp. 3121-3128, doi: 10.1109/IROS55552.2023.10342200.

Abstract

For robot manipulation, a complete and accurate object shape is desirable. Here, we present a method that combines visual and haptic reconstruction in a closed-loop pipeline. From an initial viewpoint, the object shape is reconstructed using an implicit surface deep neural network. The location with highest uncertainty is selected for haptic exploration, the object is touched, the new information from touch and a new point cloud from the camera are added, object position is re-estimated and the cycle is repeated. We extend Rustler et al. (2022) by using a new theoretically grounded method to determine the points with highest uncertainty, and we increase the yield of every haptic exploration by adding not only the contact points to the point cloud but also incorporating the empty space established through the robot movement to the object. Additionally, the solution is compact in that the jaws of a closed two-finger gripper are directly used for exploration. The object position is re-estimated after every robot action and multiple objects can be present simultaneously on the table. We achieve a steady improvement with every touch using three different metrics and demonstrate the utility of the better shape reconstruction in grasping experiments on the real robot. On average, grasp success rate increases from 63.3 % to 70.4 % after a single exploratory touch and to 82.7% after five touches. The collected data and code are publicly available (https://osf.io/j6rkd/, https://github.com/ctu-vras/vishac).

Leave a comment